「親密度を上げよう!」 (Shinmitsudo o Ageyou)

“Up Your Friendship!”

It seems Mahou Shoujo has finally found its stride. Although no deaths graced our eyes this week, the tension and suspense amped up significantly as the pieces for the first big fight(s) came together. It’s now only a question of when our magical girls decide to end this game.

The obvious conflict being teased is Swim Swim versus Sister Nana, for the sole reason that Swim Swim (again channelling Ruler’s teachings) wants to eliminate the strong threats (i.e. Weiss Winterprison) first. Quite the departure from Cranberry’s reasoning (i.e. Nana’s pacifistic interference), but it certainly makes sense: kill off the strong and the weak are easy pickings. I really have underestimated Swim Swim and her abilities, although it remains to be seen how the girl functions on the defensive—that will be her true test. Whether Swim Swim will eliminate Sister Nana (and/or Weiss) or not next time is anyone’s guess, but both Nana and Weiss are bleeding death flags something fierce at this point. There will be a fight between these two groups, and someone will die.

On the other side we have a potential Kano-Mary showdown, a distinct possibility for no other reason than Mary’s capriciousness and Kano’s detestation of Mary. Couple that with the back story death flag Kano was saddled with this time (along with Top Speed’s continued emphasis on surviving six months) and I could easily see both coming to blows. Why Mary wants to meet Kano, however, is probably the most important question. Mary’s request came out of nowhere and seemingly has no bearing on what either girl is doing. Personally though I’m betting on it having to do with Hardgore Alice, who is definitely proving to be a serious problem. We know Alice is not invulnerable (or else her bio card would have stated so), but the means to kill her remain unknown. Mary has proven that conventional means of murder have little impact, although I bet the concrete barrel trick would have worked if someone had properly welded the seams shut. The problem with Alice is that her objectives are frustratingly unclear. Alice obviously likes Koyuki (or else she wouldn’t have given Koyuki the rabbit foot), but we don’t know why (plus those freaky head tilts don’t help things). Furthermore Alice joined with Nana easily enough, but why she did is up in the air. I really have no clue what to think of Alice, but I do know she will be important in determining who ends up surviving this competition.

While this episode was mostly buildup, there was a particularly interesting bit story-wise. Mary’s discussion with Fav and her acceptance of Fav’s “job” seemingly indicates that Mary’s history with them goes back a ways too. Mary obviously knows something about what Fav (and Cranberry) are up to, but for whatever reason never joined up with them. Why Mary refused to could explain her isolation from the other girls, her devil-may-care attitude, and potentially serve as the gateway for understanding the true purpose of this twisted competition. We might not know what happens next, but guaranteed it will be bloody. Stay tuned boys and girls, next week is going to be wild.

Random Tidbits

I don’t recall ever seeing a magical girl series with this many adult-aged “girls” before. Even the Peaky Angel twins (who I had pegged as egotistical teenagers) are university age! No matter the opinion on Mahou Shoujo’s story, the series deserves credit for a surprisingly diverse cast.

Laughed hard at Fav blaming Magicaloid’s death on a slasher, guess that’s one way of describing it. Also Fav’s remarks stating magical girls can never go insane. Why would you ever doubt the puffball?

Mary might want to be careful if Kano’s back story is any indication of Kano’s fighting prowess.

I have a feeling that Swim Swim trying to follow so closely how Ruler did things could end up being her own undoing, especially since the way Ruler did things is exactly what led to her being betrayed to begin with.

Felt bad for Kano when seeing her past and being sexually harassed not just by boys in her class, but by her own mother’s FIFTH husband…yeah…

And yeah, at the moment, it seems like the only way to kill Alice would be to ambush and kill her while she’s not in magical girl form unless one of them has a power and/or item that could bypass Alice’s own ability, but damn, that whole “multi-kill” sequence was insane, and epic with how Alice just comes back.

man Mary was pretty brutal with Alice, but I don’t understand what Mary’s powers are, can she create any gun she wants?

so does Mary want to be friends with Koyuki? or is there something more?

Kano’s backstory was messed up but backstories tend to be deathflags in this show

so who will die next? Sister Nana, Winterprison, or Kano? It’s hard to tell what Mary wants from Kano. Mary did make a deal with Fav to find out who killed Magicaloid

Mary’s power is to enhance any weapon. She stored her guns inside a magic bag.

Can’t be Ripple yet.

Oh my god….I swear this is the only show that keeps me at the edge of my seat this season. It’s like one minute I go “The hell is happening??!!!” and then a minute later “THE F JUST HAPPEN??!!” Man I love how intense this show gets along with the tension it leaves at points. Seeing Mary go overkill on Alice was awesome and freaking Kano’s backstory….man her mom just sucks. Like seriously, I bet her mom is so delusional that she doesn’t realize what she’s doing is affecting her daughter (along with hooking up with pedophiles). Seeing Kano just wail on her classmates and the pedo was the icing on the cake.

Laughed hard at Fav blaming Magicaloid’s death on a slasher, guess that’s one way of describing it.”\

wow I screwed up that html tag horribly.

Anyway, I think my favorite part of this episode was when Nana/Winterprison left Koyuki alone with Alice, who then chased Koyuki around like a serial killer.

Something about Koyuki’s awkward “hahah… gotta go” made that scene 1000x funnier

Well, it has been firmly established that neither Nana nor Winterprison is the smartest.

Nana is dumb certainly, but I get the feeling Weiss is smarter than she looks. Weiss really seems like she plays along with Nana’s eccentricities, probably because she has a thing for cute faces 😛

So the “cowgirl” has the ability to draw any type of firearm. Hmm, I wonder…have we seen this kind of ability before? Oh yeah, it reminds me of this…-LOSER- I mean this girl.

That actually came from her “Hammer-Space” bag-item she bought from Fav. That isn’t her power.

Yeah, I think her power is something to do with perfect aim

Mary’s power is to enhance whatever weapon she is using.

SO many death flags, the series is like Death Flags: The Animation.

The foreboding atmosphere is great but a bit tiring to watch lol, I keep expecting everyone to die horribly at any moment.

9 characters have a chance to die next episode….place bets on everyone, no spoilers allowed.

This looked so much like Terminator T-1000 deja-vu…

I am not sure that Hardgore Alice would not pull herself back together even if she were dropped into molten metal… since the supernatural is involved. Drop a nuke on Cthulhu and he reappears 2 weeks later, just as strong and radioactive….

This kind of blatantly OP power is usually coupled with some kind of inherent weakness, such as the proverbial Achilles heel. We just don’t know it yet.

I am not sure what Alice’s motivations are, but for now she doesn’t look openly hostile towards Snow White, and is possibly even trying to act as a sort of creepy guardian.

Nana and Winterprison are mass-producing death flags. Since they are just about to run into Darth SwimSwim, I am pretty sure someone will die. SwimSwim is definitely someone you should not underestimate, with her tendency to overcome power with smarts.

Top Speed should not drink until those 6 months pass, if you know what I mean…

Ripple getting a backstory is a death flag of its own, but it might be a red herring for once since she is one of the few characters who could possibly deal with Calamity Mary in combat.

Speaking of Calamity Mary, I would not put it past her to try and recruit Ripple as an ally. She seems to appreciate cynical types, and is now aware that Alice is still alive out there and a threat. Since Cranberry is not the type to ally, and Winterprison is good-aligned with Nana, Ripple is the most obvious choice. Also, it seems meeting Alice has taken some sanity points from Calamity. Fav, not the most reliable mascot…

If you go back and watch the first episode it shows you who Alice is and why she bought the lucky rabbit’s foot for Snow White.

Regarding the Achilles heel of Hardgore Alice…

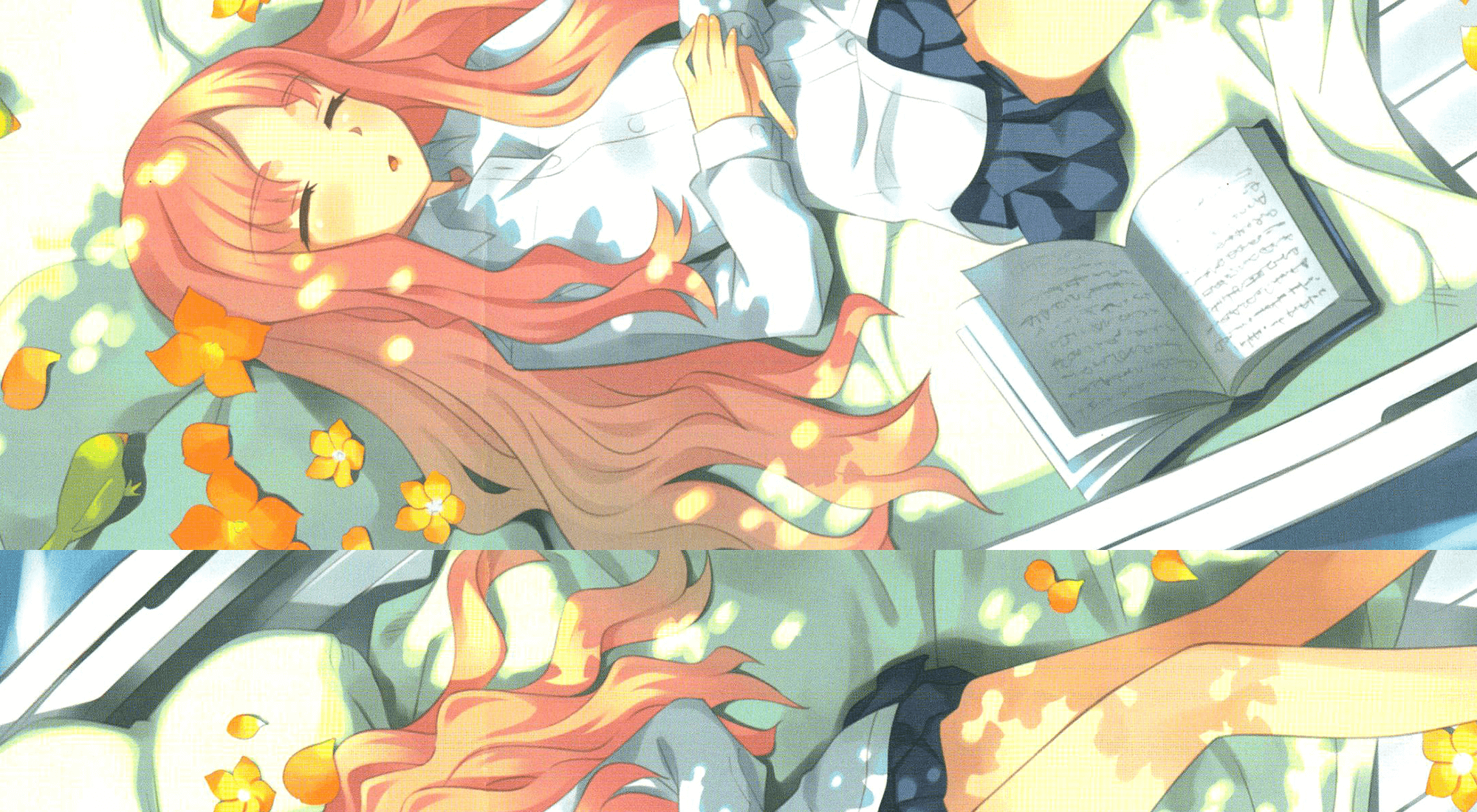

Magical boy versions of Snow White and Ripple, as drawn by Mahou’s LN illustrator, Maruino.

http://imgur.com/a/w2xmS

Makes you wonder what the others would be like as guys.

It seems we will have three opposing alliances: the Nana, Swim Swim, and Mary factions, provided neither Nana nor Winterprison is killed next episode. Mary calling two girls is probably due to her realization that she is not the strongest anymore, so she needs more allies (or lackeys) to replace Magicaloid. Cranberry is like a wild card so she never joins anyone.

Mary probably wants to eliminate Alice more than anything, she (as far as we know) doesn’t see Cranberry as a threat, so her request to Kano definitely revolves around something else. If Mary wanted a lackey she likely would dragoon Top Speed given Top Speed’s deference around her.

Just basic stuff from observation, but I’ll put it in a spoiler tag anyway so people don’t cry out.

I get the strange/horrible feeling that Alice likes Snow White because she reminds her of the/a White Rabbit as well.

Alice is almost like a slime monster…. so magic attacks might be her weakness. O_o

Or should I say elemental attacks. Mary is only augmenting her weapons’ physical power with magic, which just breaks Alice’s body down to a cellular level (probably freaking hurts as well). But you need magic that can destroy those cells as well and break the power she uses to bind them back together.

I think Fav referring to Magicaloid’s death being caused by a slasher isn’t the most ludicrous, because in all honesty, Alice does remind me a little of Jason from the Friday the 13th films. Alice survives being exploded, in containment, and manages to reform herself, which Jason has gone through before. And kind of like in Freddy vs. Jason, Jason isn’t technically “bad.” He still kills, but is partially misguided to go after these other people and largely kills when provoked, which you could make the argument for being the same with Alice. I could also compare her “ectoplasm” form to be like Jason’s demon worm form from Final Friday, but not only would I be stretching by that point, but the less we talk about that movie, the better lol.

That comparison aside, I suppose I should have expected a slower episode after the big stuff happening in last week’s episode, but it still has some weird pacing. Like it does the thing with the “It was a dream…or was it?!” idea, but it’s strange because it did happen and it cuts to that after Koyuki had seen Magicaloid die and a headless Alice. So…what, did Koyuki walk away from that awkwardly, or did Alice give her the rabbit foot, Koyuki pass out, and Alice take her back home? I don’t think Alice would have knowledge of where she lives, not by this point. It was a bizarre start, but everything else in the episode was still nice, slow but still exploring things in an interesting manner.

Also, following the backstory = death idea, I feel slight concern for Ripple, but they explored Nana and Winterprison a little bit too, at least showing us what their non-magical forms are like. I feel like there is some kind of threshold regarding how much is known before that flag triggers, but it’s an unknown variable. We’ll see by next episode if any of that is followed up or they put in a swerve to really throw us off, but nonetheless, it’ll be a big experience!

I’m also thinking Kano is not at risk of death (yet). Her and Mary coming to blows is unlikely given everything else being teased, but it’s certainly a possibility. Personally I’m betting on someone from Swim Swim’s group perishing next time.

It was mentioned in the novel that Alice took Koyuki back to her house by getting directions from Fav, after which she placed the rabbit’s foot in her hands and left.

Other omissions included a large crowd at the fountain lights event, Ripple’s internal monologue about Top Speed’s cooking being top notch, and Hardgore Alice’s plan to scout around Snow White’s usual meeting spots to make sure they were safe before meeting Sister Nana, which is where Calamity Mary was waiting to ambush Snow White, rather than planning to ambush Alice.

I don’t see Ripple going until the end, with all this 6 months thing I can imagine Mary might get Top Speed instead, which would leave Ripple devastated, finally joining Snow White or something.

I can imagine the Peaky Angels, Swim Swim and either Nana or Winter Prison being next, but not both together. Swim Swim seems a bit obvious since doing Ruler’s thing isn’t a good path.

I really hope Tama, Koyuki and Snow White survive all this the most.

I meant Ripple, not Koyuki x2

I’m hoping it’s one (or both) of the Peaky Angels, although I can easily see Swim Swim being the sacrifice. Having Weiss knocked out this fast would reduce the “good guy” powerhouses to Kano basically. Such a move would require some serious plot twisting to explain how Koyuki and Nana then end up surviving against the likes of Cranberry and Mary. Alice cannot be the only trump card IMO, that would make her a little too OP.

Totally love Hardgore Alice, being undead and having an indifferent attitude (she doesn’t even say ouch when she’s being shot at) plus the head tilt. Giving away an item that cost your life just because you ‘feel like it’ just makes her better. Though she probably just doesn’t want to say the truth. As for how she can be defeated, I don’t think Alice can die, unless she wants to. Looking forward to Swim Swim team vs Sister Nana team next week. I expect that Swim Swim will win it with her skill to move through anything combined with that spear.

Mary calling Ripple is most likely part of her pact with Fav. She is more competent than I expected: she somehow got some background information on Fav, probably by using her black market contacts. On the other hand, using this information to blackmail him is not the smartest thing to do. I feel that Fav is setting up a situation to get rid of her. Most likely that meeting with Ripple will quickly escalate and Mary won’t survive it.

I like Tama the most but for sure she is the low hanging fruit on the tree if someone wants to knock off another magical girl. I’m pretty sure SwimSwim is a little kid so I don’t want to see her die. Top Speed is either pregnant or has a terminal illness and only has 6 months to live, so if she is pregnant I would not wanna see her die either. Also don’t want to see Alice, Snow White, or Sister Nana go.

One last thing… is it possible the lucky rabbit’s foot lets you bring back one dead person and she will bring back La Pucelle?